Vision Algorithms for Embedded Vision

Most computer vision algorithms were developed on general-purpose computer systems with software written in a high-level language. Some of the pixel-processing operations (e.g., spatial filtering) have changed very little in the decades since they were first implemented on mainframes. In today’s broader range of embedded vision implementations, existing high-level algorithms may not fit within the system constraints, so innovation is required to achieve the desired results.
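As an illustration of the kind of pixel-processing operation mentioned above, here is a minimal sketch of a 3x3 mean (box) spatial filter in plain Python. The function name `box_filter_3x3`, the list-of-lists image representation, and the choice to copy border pixels unchanged are assumptions made for this example; they are not taken from any particular library.

```python
def box_filter_3x3(image):
    """Smooth a grayscale image (2D list of ints) with a 3x3 mean filter.

    Border pixels are copied unchanged, which is only one of several
    possible border policies (an assumption for this sketch).
    """
    height = len(image)
    width = len(image[0])
    out = [row[:] for row in image]  # copy so the input is not modified
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            total = 0
            # Sum the 3x3 neighborhood centered on (x, y).
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    total += image[y + dy][x + dx]
            out[y][x] = total // 9  # integer mean of the nine pixels
    return out


if __name__ == "__main__":
    noisy = [
        [10, 10, 10, 10],
        [10, 100, 10, 10],
        [10, 10, 10, 10],
    ]
    for row in box_filter_3x3(noisy):
        print(row)
```

Every output pixel needs nine reads and eight additions, which is why per-pixel cost dominates the analysis once image sizes and frame rates grow.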

Some of this innovation may involve replacing a general-purpose algorithm with a hardware-optimized equivalent. With such a broad range of processors for embedded vision, algorithm analysis will likely focus on ways to maximize pixel-level processing within system constraints.
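One common example of such a replacement, offered here as an illustration rather than as a method described by the Alliance, is swapping floating-point arithmetic for fixed-point integer arithmetic. The sketch below converts an RGB pixel to luma using the widely used BT.601 weights, first in floating point and then with 8-bit fixed-point coefficients (77, 150, 29, which sum to 256). The function names are hypothetical.

```python
def luma_float(r, g, b):
    """Reference luma using floating-point BT.601 weights."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def luma_fixed(r, g, b):
    """Integer-only approximation: weights scaled by 256, then shifted.

    77/256, 150/256 and 29/256 approximate 0.299, 0.587 and 0.114.
    Only multiplies, adds and a shift are needed, which maps well to
    DSPs, FPGAs and small microcontrollers without a fast FPU.
    """
    return (77 * r + 150 * g + 29 * b) >> 8


if __name__ == "__main__":
    for pixel in [(255, 255, 255), (255, 0, 0), (12, 200, 90)]:
        print(pixel, round(luma_float(*pixel), 2), luma_fixed(*pixel))
```

The integer version trades a small, bounded error (under two gray levels for 8-bit inputs) for much cheaper arithmetic, which is the kind of trade-off algorithm analysis for embedded targets tends to weigh.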

This section refers to both general-purpose operations (e.g., edge detection) and hardware-optimized versions (e.g., parallel adaptive filtering in an FPGA). Many sources exist for general-purpose algorithms. The Embedded Vision Alliance is one of the best industry resources for learning about algorithms that map to specific hardware, since Alliance Members share this information directly with the vision community.
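To make the edge detection example concrete, the following is a minimal sketch of a Sobel edge detector in plain Python. It assumes a grayscale image stored as a list of lists, leaves border pixels at zero, and approximates the gradient magnitude as |gx| + |gy| instead of the Euclidean norm; that approximation is a deliberate simplification for this example because it avoids a square root. The name `sobel_magnitude` is hypothetical.

```python
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))   # responds to vertical edges
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))   # responds to horizontal edges


def sobel_magnitude(image):
    """Return an approximate gradient magnitude for each interior pixel."""
    height, width = len(image), len(image[0])
    out = [[0] * width for _ in range(height)]  # borders stay at 0
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for ky in range(3):
                for kx in range(3):
                    pixel = image[y + ky - 1][x + kx - 1]
                    gx += SOBEL_X[ky][kx] * pixel
                    gy += SOBEL_Y[ky][kx] * pixel
            # |gx| + |gy| approximates sqrt(gx^2 + gy^2) without a sqrt.
            out[y][x] = abs(gx) + abs(gy)
    return out


if __name__ == "__main__":
    step = [[0, 0, 255, 255] for _ in range(4)]  # vertical step edge
    for row in sobel_magnitude(step):
        print(row)
```

Because each output pixel depends only on its own 3x3 neighborhood, the inner computation can be evaluated for many pixels at once, which is what makes operations like this natural candidates for parallel hardware.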

General-purpose computer vision algorithms

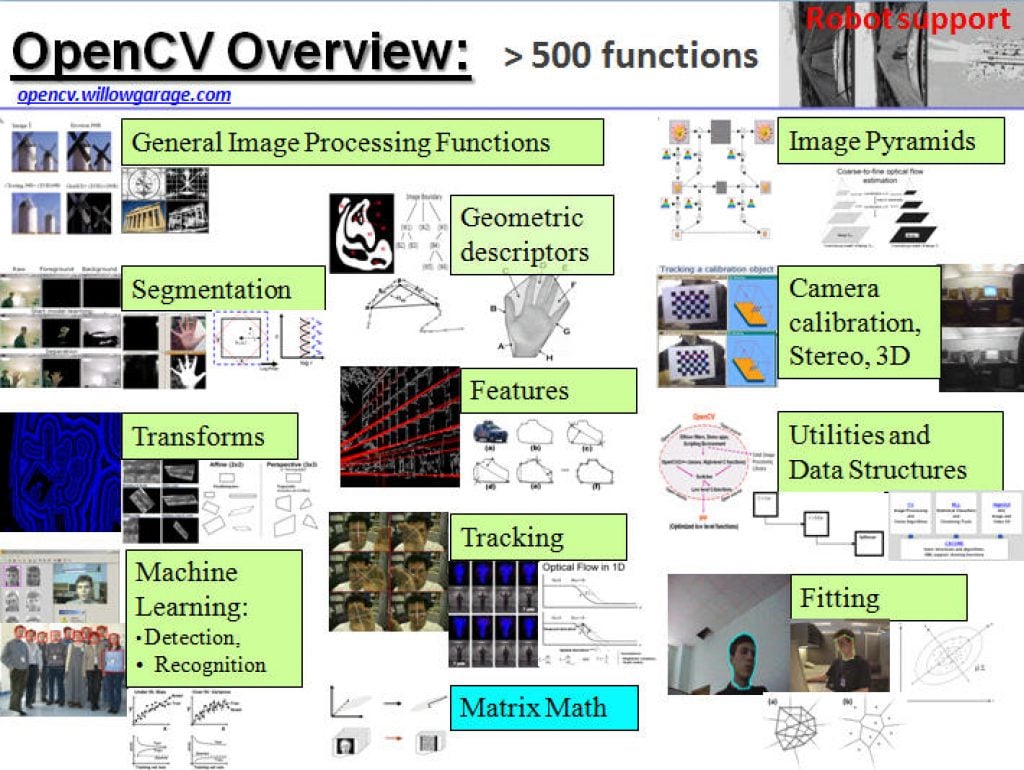

One of the most popular sources of computer vision algorithms is the OpenCV library. OpenCV is open source and written primarily in C++, with bindings for other languages such as Python. For more information, see the Alliance’s interview with OpenCV Foundation President and CEO Gary Bradski, along with other OpenCV-related materials on the Alliance website.

Hardware-optimized computer vision algorithms

Several programmable device vendors have created optimized versions of off-the-shelf computer vision libraries. NVIDIA, for example, works closely with the OpenCV community and has created algorithms that are accelerated by GPGPUs. MathWorks provides MATLAB functions/objects and Simulink blocks for many computer vision algorithms within its Vision System Toolbox, while also allowing vendors to create their own libraries of functions that are optimized for a specific programmable architecture. National Instruments offers its LabVIEW Vision module library. Xilinx likewise provides customers with an optimized computer vision library in the form of Plug and Play IP cores for creating hardware-accelerated vision algorithms in an FPGA.

Other vision libraries

- Halcon

- Matrox Imaging Library (MIL)

- Cognex VisionPro

- VXL

- CImg
