A version of this article was previously published in the February/March edition of Imaging and Machine Vision Europe. It is reprinted here with the permission of Imaging and Machine Vision Europe.

We started the Embedded Vision Alliance in 2011 because we believed that there would soon be unprecedented growth in investment, innovation and deployment of practical computer vision technology across a broad range of markets. Less than a decade later, our expectation has been fulfilled. For example, data presented by Woodside Capital at the 2018 Embedded Vision Summit documents 100x growth in U.S.A.- and China-based vision companies over the past 6 years. These investments (and others not reflected in this data, such as investments taking place in other countries and companies’ internal investments) are enabling companies to rapidly accelerate their vision-related research, development and deployment activities.

To help understand technology choices and trends in the development of vision-based systems, devices and applications, the Embedded Vision Alliance conducts an annual survey of product developers. In the most recent iteration of this survey, completed in November 2018, 93% of respondents reported that they expect an increase in their organization’s vision-related activity over the coming year (61% expect a large increase). Of course, these companies expect their increasing development investments to translate into increased revenue. Market researchers agree. For example, market research by Tractica forecasts a 25x increase in revenue for computer vision hardware, software and services between now and 2025, reaching US$26B.

Three fundamental factors are driving the accelerating proliferation of visual perception:

- It increasingly works well enough for diverse, real-world applications

- It can be deployed at low cost and with low power consumption

- It's increasingly usable by non-specialists

Four key trends underlie these factors:

- Deep learning

- 3D perception

- Fast, inexpensive, energy-efficient processors

- The democratization of hardware and software

Deep Learning

Traditionally, computer vision applications have relied on highly specialized algorithms painstakingly designed for each specific application and use case. In contrast to traditional algorithms, deep learning uses generalized learning algorithms which are trained through examples. DNNs have transformed computer vision, delivering superior results on tasks such as recognizing objects, localizing objects within a frame and determining which pixels belong to which object. Even problems previously considered “solved” with conventional techniques are now finding better solutions using deep learning techniques.

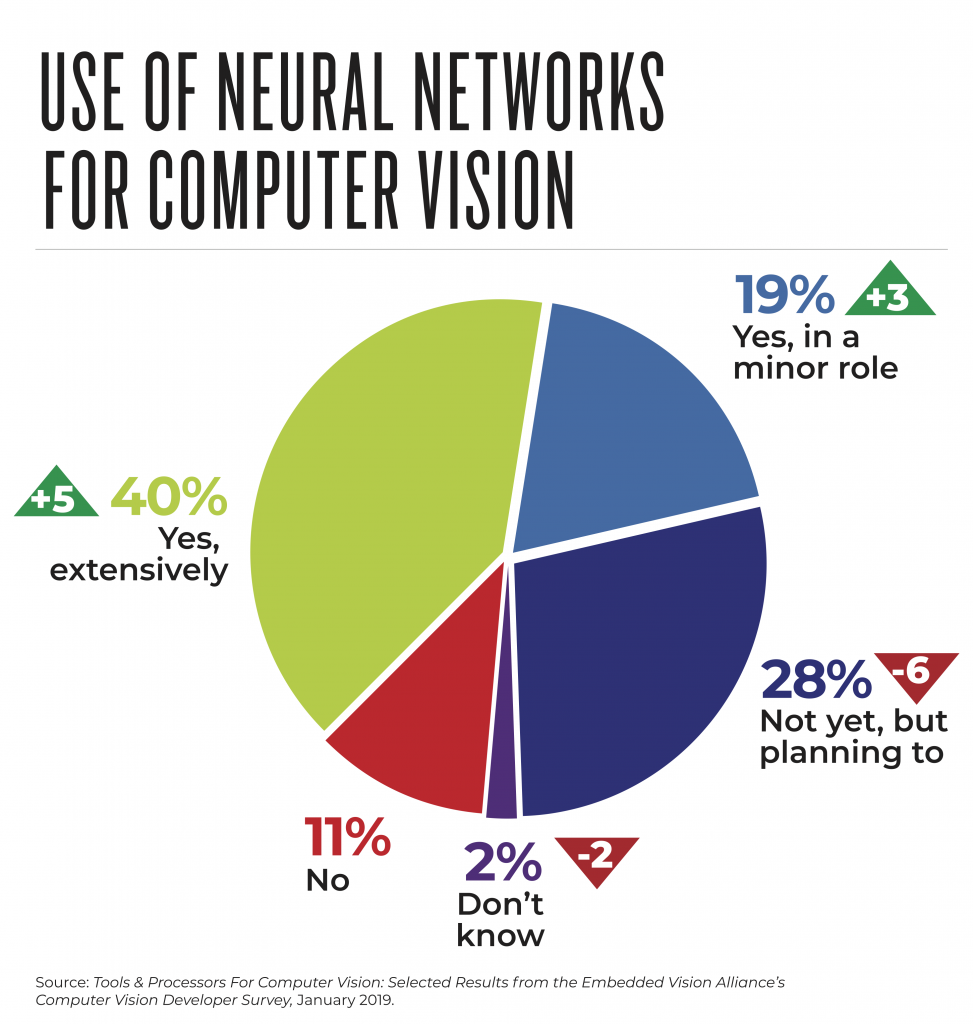

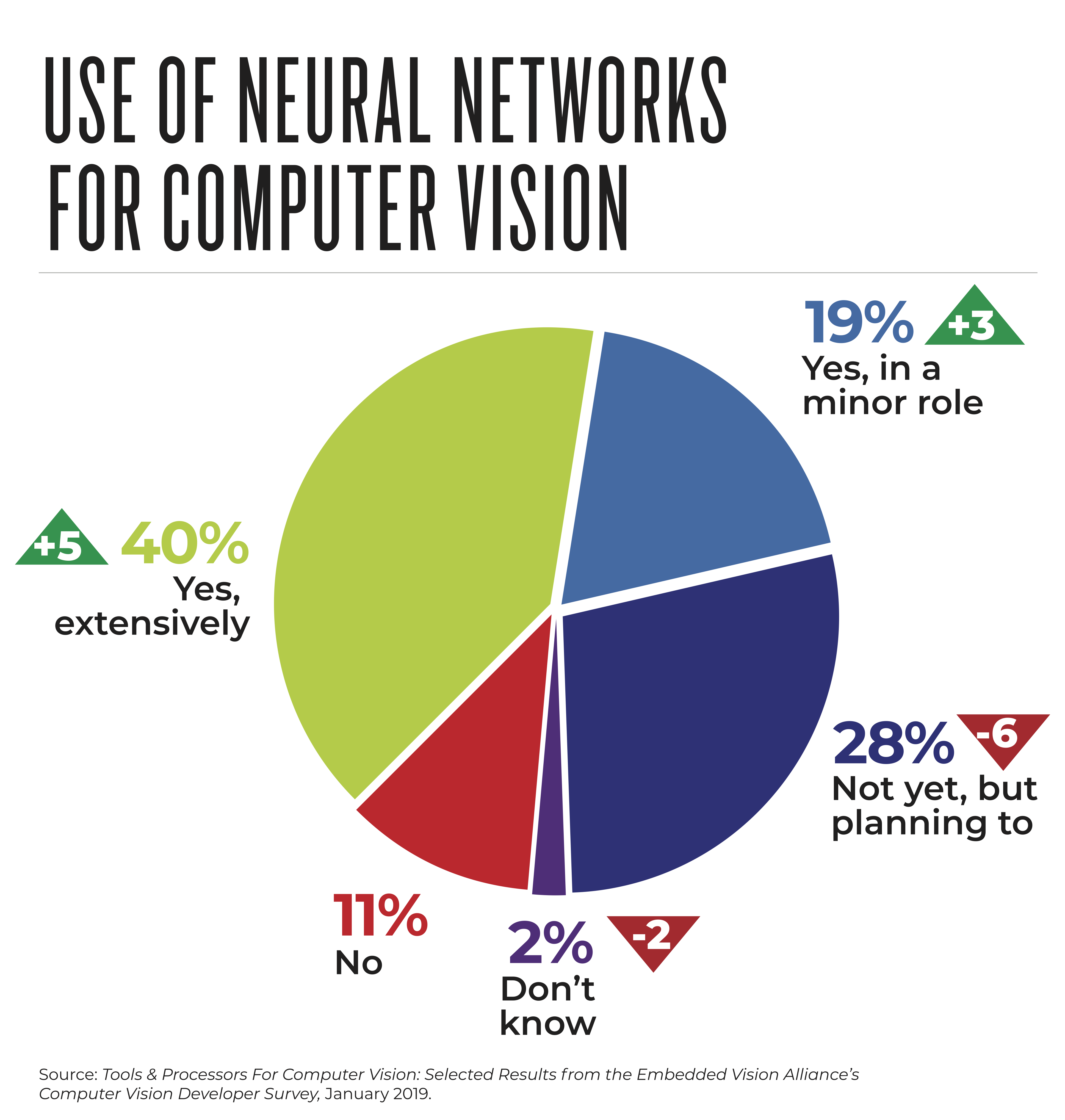

As a result, computer vision developers are increasingly adopting deep learning techniques. In the Alliance's most recent survey, 59% of vision system developers are already using DNNs (an increase from 34% two years ago). Another 28% are planning to use DNNs for visual intelligence in the near future (Figure 1).

Figure 1. Conducted in November 2018, the Computer Vision Developer Survey from the Embedded Vision Alliance surveyed professional developers of computer vision-based systems and applications. Fifty-nine percent of surveyed developers reported that they are currently using deep learning for visual perception, an increase from 51% a year earlier. (Figures within triangles indicate the change, in percentage points, from the November 2017 Survey to the November 2018 Survey.)

3D Perception

The 2D image sensors found in many vision systems enable a tremendous breadth of vision capabilities. But adding depth information can be extremely valuable. For example, a gesture-based user interface, the ability to discern not only lateral motion, but also motion perpendicular to the sensor, greatly expands the variety of gestures that a system can recognize.

In other applications, depth information enhances accuracy. In face recognition, for example, depth sensing is valuable in determining that the object being sensed is an actual face, versus a photograph. And the value of depth information is obvious in moving systems, such as mobile robots and automobiles.

Historically, depth sensing has been an exotic, expensive technology, but this has changed dramatically in the past few years. The use of optical depth sensors in the Microsoft Kinect, and more recently in mobile phones, has catalyzed a rapid acceleration in innovation, resulting in depth sensors that are tiny, inexpensive and energy-efficient.

This change has not been lost on system developers. Thirty-four percent of developers participating in the Alliance’s most recent survey are already using depth perception, with another 29% (up from 21% a year ago) planning to incorporate depth in upcoming projects across a broad range of industries.

Fast, Inexpensive, Energy-efficient Processors

Arguably, the most important ingredient driving the widespread deployment of visual perception is better processors. Vision algorithms typically have huge appetites for computing performance. Achieving the required levels of performance with acceptable cost and power consumption is a common challenge, particularly as vision is deployed into cost-sensitive and battery-powered devices.

Fortunately, in the past few years there’s been an explosion in the development of processors tuned for computer vision applications. These purpose-built processors are now coming to market, delivering huge improvements in performance, cost, energy efficiency and ease of development.

Progress in efficient processors has been boosted by the growing adoption of deep learning for two reasons. First, deep learning algorithms tend to require even more processing performance than conventional computer vision algorithms, amplifying the need for more efficient processors. Second, the most widely used deep learning algorithms share many common characteristics, which simplifies the task of designing a specialized processor intended to execute these algorithms very efficiently. (In contrast, conventional computer vision algorithms exhibit extreme diversity.)

Today, typically, computer vision algorithms use a combination of a general-purpose CPU and a specialized parallel co-processor. Historically, GPUs have been the most popular type of co-processor, because they were widely available and supported with good programming tools. These days, there’s a much wider range of co-processor options, with newer types of co-processors typically offering significantly better efficiency compared to GPUs. The trade-off is that these newer processors are less widely available, less familiar to developers and not yet as well supported by mature development tools.

According to the most recent Embedded Vision Alliance developer survey, nearly one-third of respondents are using deep learning-specific co-processors. This is remarkable, considering that deep learning-specific processors didn't exist a few years ago.

Democratization of Computer Vision

By “democratization,” we mean that it's becoming much easier to develop computer vision-based algorithms, systems and applications, as well as to deploy these solutions at scale – enabling many more developers and organizations to incorporate vision into their systems. What is driving this trend? One answer is the rise of deep learning. Because of the generality of deep learning algorithms, with deep learning there’s less of a need to develop specialized algorithms. Instead, developer focus can shift to selecting among available algorithms, and then to obtaining the necessary quantities of training data.

Another critical factor in simplifying computer vision development and deployment is the rise of cloud computing. For example, rather than spending days or weeks installing and configuring development tools, today engineers can get instant access to pre-configured development environments in the cloud. Likewise, when large amounts of compute power are required to train or validate a neural network algorithm, this compute power can be quickly and economically obtained in the cloud. And cloud computing offers an easy path for initial deployment of many vision-based systems, even in cases where ultimately developers will switch to edge-based computing, for example to reduce costs.

The most recent Embedded Vision Alliance developer survey found that 75% of respondents using deep neural networks for visual understanding in their products deploy those neural networks at the edge, while 42% use the cloud. (These figures total to more than 100% because some survey respondents use both approaches.)

The Embedded Vision Summit

The world of practical computer vision is changing very fast – opening up many exciting technical and business opportunities. An excellent way to learn about the latest developments in practical computer vision is to attend the Embedded Vision Summit, taking place May 20-23, 2019 in Santa Clara, California. The Summit attracts a global audience of over one thousand product creators, entrepreneurs and business decision-makers who are developing and using computer vision technology. It has experienced exciting growth over the last few years, with 97% of 2018 Summit attendees reporting that they’d recommend the event to a colleague. The Summit is the place to learn about the latest applications, techniques, technologies and opportunities in visual AI and deep learning. Online registration is now available. I hope to see you there!

Jeff Bier

Founder, Embedded Vision Alliance