This article was originally published at Imagination Technologies' website, where it is one of a series of articles. It is reprinted here with the permission of Imagination Technologies.

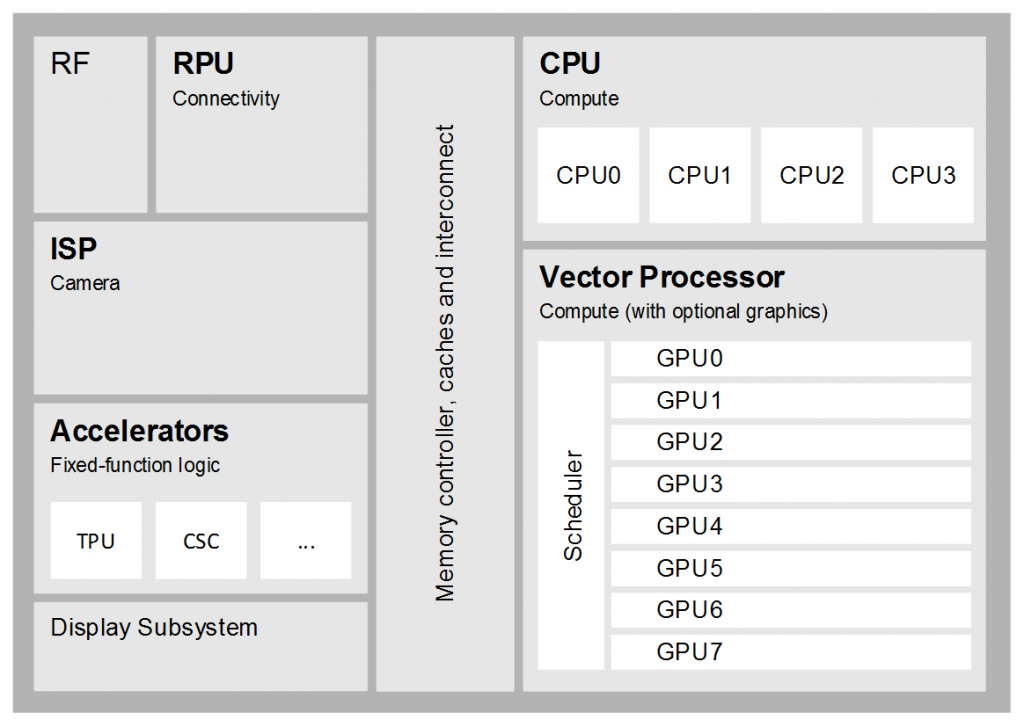

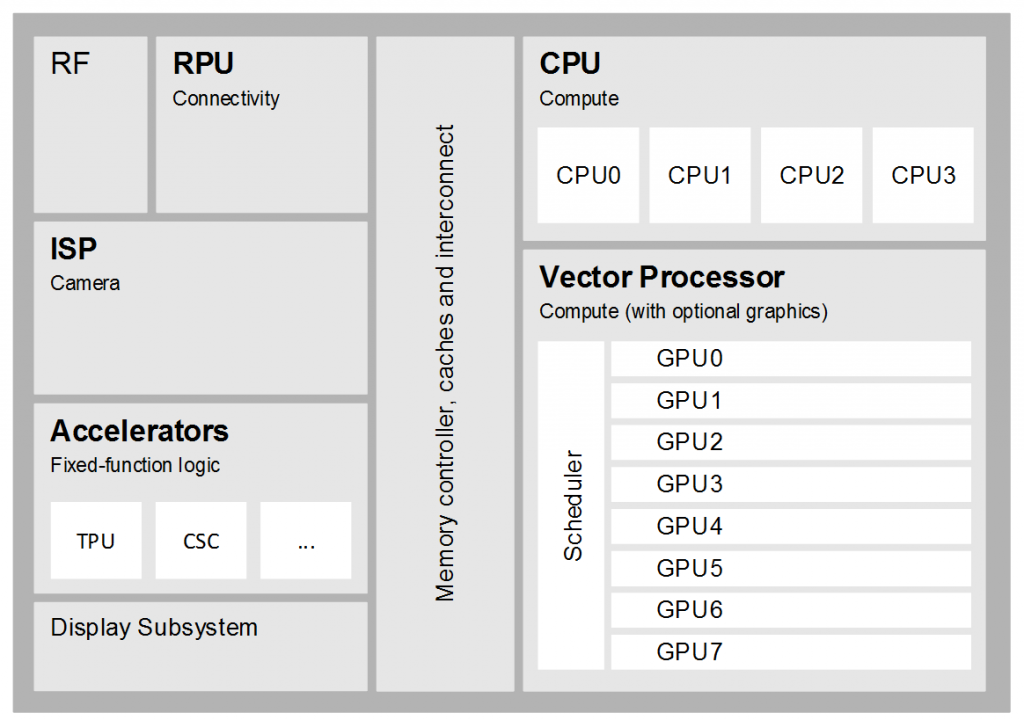

Modern mobile application processors are highly heterogeneous, combining a variety of hardware components that are each optimized for different tasks. As shown in the figure below, a processor designed for vision might include an Image Signal Processor (ISP) for acquiring image sensor data, a vector processor such as a GPU for efficient data-parallel operations on pixels and feature vectors, and a CPU for implementing higher-level heuristics and decision-making. A sensor-fusion device might further combine vision processing with complementary technologies such as radar, ultrasonic, sonar and LIDAR, as well as input from compasses, accelerometers and wheel encoders.

Components of a modern vision processor

Imagination provides all of the hardware components needed to build efficient vision products today, including:

Image Signal Processor

The PowerVR Raptor ISP can perform many common image pre-processing tasks such as noise reduction and normalizing colour and gamma values. When combined with a PowerVR GPU, areas of an image can be de-warped on-the-fly into on-chip local memory for onward GPU compute processing.
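As a rough software illustration of the kind of gamma adjustment mentioned above, the sketch below builds a lookup table and applies it to an 8-bit greyscale image. It is not a description of how the Raptor ISP implements the step in hardware; the function names `build_gamma_lut` and `apply_lut`, the power-law curve, and the choice of an encoding exponent of `1 / gamma` are all assumptions made for the example.

```python
def build_gamma_lut(gamma, levels=256):
    """Build a lookup table mapping linear pixel values to gamma-encoded ones.

    Assumption for this sketch: a simple power-law curve with exponent
    1 / gamma, applied to integer pixel values in the range 0..levels-1.
    """
    max_value = levels - 1
    return [
        round(max_value * (value / max_value) ** (1.0 / gamma))
        for value in range(levels)
    ]


def apply_lut(image, lut):
    """Apply a lookup table to every pixel of a 2D list of integers."""
    return [[lut[pixel] for pixel in row] for row in image]


# Hypothetical 2x2 greyscale image used only to exercise the functions.
example_image = [[0, 64], [128, 255]]
encoded_image = apply_lut(example_image, build_gamma_lut(2.2))
```

A lookup table is used here because the curve only needs to be evaluated once per possible pixel value, after which each pixel costs a single table read.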

Vector Processor (GPU)

PowerVR GPUs are high-performance vector processors that can efficiently execute many data-parallel computations at low power. As explained in a previous article, convolution filters, along with other tasks such as constructing an image pyramid or integral image, can be highly optimized for parallel execution with local memory re-use. Baidu’s engineers applied similar techniques to offload a convolutional neural network from the CPU to the PowerVR GPU in a handset using a MediaTek MT6595-based SoC, improving matching performance while also increasing the battery life of the handset from a couple of hours to a full day’s use.
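To make the integral image concrete, here is a minimal sequential reference in plain Python. It is only a sketch of the data structure, not the GPU implementation referred to above; the names `integral_image` and `box_sum` are chosen for the example. On a GPU the same table would typically be built from parallel prefix sums along rows and then columns.

```python
def integral_image(image):
    """Return the summed-area table of a 2D list of numbers.

    The table has one extra leading row and column of zeros, so that
    table[y][x] holds the sum of all pixels above and to the left of (x, y).
    """
    height = len(image)
    width = len(image[0]) if height else 0
    table = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0  # running sum of the current row
        for x in range(width):
            row_sum += image[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table


def box_sum(table, top, left, bottom, right):
    """Sum the pixels in rows top..bottom-1 and columns left..right-1."""
    return (table[bottom][right] - table[top][right]
            - table[bottom][left] + table[top][left])


# Hypothetical 3x3 image used only to exercise the functions.
pixels = [[1, 2, 3],
          [4, 5, 6],
          [7, 8, 9]]
table = integral_image(pixels)
lower_right_sum = box_sum(table, 1, 1, 3, 3)  # covers 5, 6, 8 and 9
```

Once the table exists, the sum over any rectangle costs four reads regardless of its size, which is why the structure is popular for box filters and sliding-window detectors.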

Accelerators

As well as supporting efficient vector processing, PowerVR GPUs provide several hardware accelerators that can be leveraged by vision algorithms. The Texture Processing Unit (TPU) supports efficient sampling of image pixels from memory combined with optional interpolation, for example to prevent aliasing during image registration. The colour-space conversion unit supports on-the-fly conversion of sampled pixels for cases where luminosity data alone is insufficient, for example when detecting skin colour in a face or searching for colours in road signs.
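The interpolated sampling described above can be sketched in software as bilinear interpolation. The example below is an illustration only: the function name `sample_bilinear`, the convention that integer coordinates fall on pixel centres, and the clamp-to-edge behaviour are assumptions, and texture hardware may use different coordinate conventions and addressing modes.

```python
import math


def sample_bilinear(image, x, y):
    """Sample a 2D list of grey values at fractional coordinates (x, y).

    Assumptions for this sketch: integer coordinates fall exactly on pixel
    centres, and coordinates outside the image are clamped to its edge.
    """
    height = len(image)
    width = len(image[0])
    # Clamp the sample position to the valid pixel range.
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    # Locate the four neighbouring pixels.
    x0 = int(math.floor(x))
    y0 = int(math.floor(y))
    x1 = min(x0 + 1, width - 1)
    y1 = min(y0 + 1, height - 1)
    # Fractional offsets act as blend weights.
    fx = x - x0
    fy = y - y0
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


# Hypothetical 2x2 image used only to exercise the function.
grey = [[0, 10],
        [20, 30]]
centre_value = sample_bilinear(grey, 0.5, 0.5)
```

Each sample needs four pixel reads and three blends, which is the work a texture unit takes off the shader cores when it performs the filtering itself.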

Some products support hardware acceleration of more specific vision tasks. For example, the OMAP4470 processor from Texas Instruments includes a hardware face detector. Vadaro chose this processor to create their retail analytics camera, utilizing the face detection hardware to identify shoppers. The resulting coordinates are then fed into an SVM classifier that identifies customer profiles such as age and gender, and which is optimized by offloading compute operations such as dot products to the PowerVR GPU. Similarly, some automotive devices contain hardware to accelerate common tasks such as HOG detection. The decision to commit a specific task to hardware is usually taken after considering two things: how widely the task is likely to be used across all users of the device, and whether the algorithm is likely to need adapting in the near future, for example as a result of improvements in computer vision research.
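To show why dot products dominate the cost of an SVM classifier, the sketch below evaluates a linear SVM decision function in plain Python. The article does not say whether Vadaro's classifier is linear or kernel-based, so the linear form, the function names and the example weights are all assumptions made for illustration.

```python
def dot(a, b):
    """Dot product of two equal-length sequences of numbers."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))


def linear_svm_score(weights, bias, features):
    """Signed score of a linear SVM: w . x + b."""
    return dot(weights, features) + bias


def classify(weights, bias, features):
    """Return +1 or -1 depending on the sign of the score."""
    return 1 if linear_svm_score(weights, bias, features) >= 0 else -1


# Hypothetical trained parameters and feature vector.
weights = [0.5, -1.0, 2.0]
bias = -0.5
features = [1.0, 1.0, 1.0]
label = classify(weights, bias, features)
```

Every multiply-add inside `dot` is independent of the others, so the work for long feature vectors, or for many candidate regions at once, maps naturally onto a data-parallel processor.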

Hopefully this article has provided a short overview of the on-chip processing blocks that are relevant to computer vision applications.

Further reading

Computer vision is what we’ll continue to focus on for the next section of our heterogeneous compute series; stay tuned for an overview of how you can use OpenCL on PowerVR for face detection.

By Doug Watt

Multimedia Strategy Manager, Imagination Technologies