Development Tools for Embedded Vision

The software tools (compilers, debuggers, operating systems, libraries, etc.) for embedded vision encompass most of the standard arsenal used for developing real-time embedded processor systems, with the addition of specialized vision libraries and, in some cases, vendor-specific development tools. On the hardware side, the requirements depend on the application space, since the designer may need equipment for monitoring and testing real-time video data. Most of these hardware development tools are already used for other types of video system design.

Both general-purpose and vendor-specific tools

Many vendors of vision devices use integrated CPUs that are based on the same instruction set (ARM, x86, etc.), allowing a common set of tools to be used for software development. However, even though the base instruction set is the same, each CPU vendor integrates a different set of peripherals that have unique software interface requirements. In addition, most vendors accelerate the CPU with specialized computing devices (GPUs, DSPs, FPGAs, etc.). This extended CPU programming model requires a customized version of the standard development tools. Most CPU vendors develop their own optimized software tool chain, while also working with third-party software tool suppliers to make sure that the CPU components are broadly supported.

Heterogeneous software development in an integrated development environment

Since vision applications often require a mix of processing architectures, the development tools become more complicated and must handle multiple instruction sets and additional system debugging challenges. Most vendors provide a suite of tools that integrate development tasks into a single interface for the developer, simplifying software development and testing.
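As a rough illustration of why a mix of processing architectures complicates the software, the sketch below routes a vision kernel to the first available processing unit that implements it and falls back to the CPU otherwise. Every name here (`Backend`, `Dispatcher`, the `"gpu"` and `"cpu"` labels, `threshold_cpu`) is hypothetical and not taken from any vendor's tool suite; a real heterogeneous tool chain would handle this through its own compilers and runtime.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical types and names, for illustration only.
Image = List[List[int]]  # grayscale image as rows of pixel values


def threshold_cpu(img: Image, t: int) -> Image:
    """Reference CPU kernel: pixels >= t become 255, all others 0."""
    return [[255 if p >= t else 0 for p in row] for row in img]


@dataclass
class Backend:
    """One processing unit (CPU, GPU, DSP, ...) and the kernels it offers."""
    name: str
    available: bool
    kernels: Dict[str, Callable[..., Image]] = field(default_factory=dict)


class Dispatcher:
    """Tries backends in priority order and reports which one ran the kernel."""

    def __init__(self, backends: List[Backend]) -> None:
        self.backends = backends

    def run(self, kernel: str, *args) -> Tuple[str, Image]:
        for backend in self.backends:
            if backend.available and kernel in backend.kernels:
                return backend.name, backend.kernels[kernel](*args)
        raise LookupError(f"no available backend implements {kernel!r}")


# Assumed setup: an accelerator that is not present on this board,
# followed by the CPU as the fallback.
dispatcher = Dispatcher([
    Backend("gpu", available=False, kernels={"threshold": threshold_cpu}),
    Backend("cpu", available=True, kernels={"threshold": threshold_cpu}),
])
```

Calling `dispatcher.run("threshold", image, 128)` returns both the name of the backend that executed the kernel and the result, which hints at the extra bookkeeping (and debugging surface) that an integrated development environment has to expose once several instruction sets are in play.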

Unleashing the Potential for Assisted and Automated Driving Experiences Through Scalability

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. Working within an ecosystem of innovators and suppliers is paramount to addressing the challenge of building a scalable ADAS solution. While the recent sentiment around fully autonomous vehicles is not overly positive, more and more vehicles on

The Building Blocks of AI: Decoding the Role and Significance of Foundation Models

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. These neural networks, trained on large volumes of data, power the applications driving the generative AI revolution. Editor’s note: This post is part of the AI Decoded series, which demystifies AI by making the technology more accessible,

Oriented FAST and Rotated BRIEF (ORB) Feature Detection Speeds Up Visual SLAM

This blog post was originally published at Ceva’s website. It is reprinted here with the permission of Ceva. In the realm of smart edge devices, signal processing and AI inferencing are intertwined. Sensing can require intense computation to filter out the most significant data for inferencing. Algorithms for simultaneous localization and mapping (SLAM), a type

Achieving a Zero-incident Vision In Your Warehouse with Dragonfly

At Onit, we’re revolutionizing the efficiency and safety standards in warehouse environments through edge AI and computer vision. Leveraging our state-of-the-art Dragonfly and RTLS (real-time locating system) applications, we address the complex challenges inherent in chaotic and labor-intensive operations. Our Dragonfly technologies are taking workplace safety to new heights with the latest release of Dragonfly

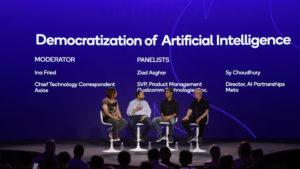

Democratizing AI: Top 5 Insights from Axios, Meta, Black Magic Design, and Our Panel of Industry Titans

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. In a panel discussion at our annual Snapdragon Summit in the breathtaking setting of Maui, Hawaii, we had the privilege of engaging in a dynamic conversation with four esteemed experts about the democratization of artificial intelligence (AI).

AI Decoded: Demystifying Large Language Models, the Brains Behind Chatbots

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Explore what LLMs are, why they matter and how to use them. Editor’s note: This post is part of our AI Decoded series, which aims to demystify AI by making the technology more accessible, while showcasing new

Edge AI and Vision Alliance Conversation with GenAI Nerds on Generative AI At the Edge

Kerry Shih of GenAI Nerds interviews Jeff Bier, Founder of the Edge AI and Vision Alliance, and Phil Lapsley, the Alliance’s Vice President of Business Development, about the opportunities and trends for generative AI at the edge. Shih, Bier and Lapsley discuss topics such as: Where we are in the generative AI hype cycle What

Microchip Technology Acquires Neuronix AI Labs

Innovative technology enhances AI-enabled intelligent edge solutions and increases neural networking capabilities. CHANDLER, Ariz., April 15, 2024 — Microchip Technology (Nasdaq: MCHP) has acquired Neuronix AI Labs to expand its capabilities for power-efficient, AI-enabled edge solutions deployed on field programmable gate arrays (FPGAs). Neuronix AI Labs provides neural network sparsity optimization technology that enables a